I have even seen conditions where it is nearly at a standstill. As it turns out it is very easy to get into pathological GC conditions where you are very close to the memory limit where it doesn’t not increase the heap size but instead drastically increases the frequency of collections causing the performance of the benchmark to plummet. It turns out that tuning the VM is absolutely critical with Sun’s VM if you want the best performance - and it isn’t a small difference either.

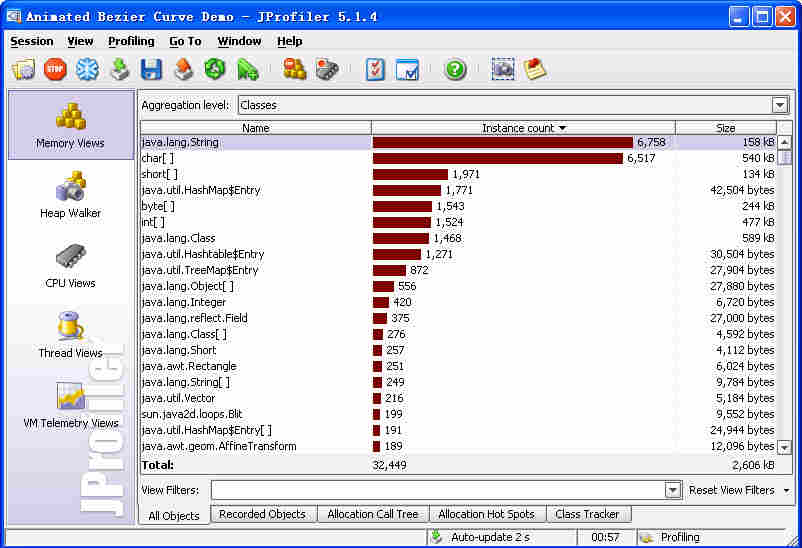

You’ll notice that we are executing these benchmarks with the absolute default as far as tuning the Java VM goes. Making these changes - without changing the interface to the library which was quite simple - netted us quite a profit:ĮnvironmentThreadsGames / secondRanks / second Mac OS X, 1.5 client VM126525389610 One consequence of this is that I found a few bugs and added a few new tests to the system so it was a very useful exercise even separate from the performance optimizations. I spent a couple hours painstakingly moving the collections usage over to arrays in all the hotspots that I found in the code. Especially for something as data intensive as this application. So what do the profiles show? It turns out that using the collections libraries, even those without concurrency and carefully choosing implementations, you still end up spending tons of time within them rather than doing the real work of your program. So our base benchmarks look like this (best of 3):ĮnvironmentThreadsGames / secondRanks / second Mac OS X, 1.5 client VM111093130975 I knew at the time though that there were probably many optimizations that could be done either with custom collections or by using arrays when appropriate. The starting point for the poker engine was written using the Java collection classes and leveraged them quite a bit to make things clean and easy to understand. Looks like they need an IntelliJ IDEA plugin :) Another advantage of JIP was that programs execute about twice as fast as under JProfiler. JIP though is $499 cheaper and doesn’t have a nice runtime graphical display of the progress. The one that was the cheapest (free) and most barebones that worked was JIP-1.0.7 and I also think it was more accurate for methods that get inlined at runtime but they basically showed identical results. I tried a bunch of different profilers but the one that has the best integration with my IDE and also performs quite well was JProfiler 4.0 (integrated with IntelliJ IDEA). 
The second thing that I did was go and get a profiler. If you run the benchmark on another system, please send me the results or post them in the comments. The benchmarks will be reported on three different systems:Ī) MacPro, Mac OS X 10.4.9, 2x Intel Xeon 5160 (dual core 3 ghz), 8G RAM, JDK 1.5.0_07ī) MacPro, Windows XP SP2, 2x Intel Xeon 5160 (dual core 3 ghz), 8G RAM, JDK 1.5.0_11 + JDK 1.6.0 + JRockit 5.0 R27.2ī) Dell 1850, 2x Intel Xeon 2.8 ghz (1st gen dual core, hyperthreading enabled), 4G RAM, JDK 1.5.0_11, JDK 1.6.0 This should reflect what would be required to do Monte Carlo simulations or full solution space searches. This should reflect the worst case scenario for a poker server that is trying to serve games to users.Ģ) Evaluate random hands with random boards. The two benchmarks I produced to test the poker library were the following:ġ) Run an entire 10 hand Texas Hold’em poker game from beginning to end with no one folding and determine a winner. So the first stage of any optimization project is to create benchmarks that accurately reflect the usage of the system in the real world - with some nod to the worst case scenario. Some of my findings give insight into what kinds of things are optimized in JDK 1.6 vs JDK 1.5 and how things vary between Mac OS X, Windows, and Linux. Last night I decided to revive my poker hand evaluator library and look at it from a performance perspective and do some optimizations if need be.
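One way to spot the GC pressure this post describes is to count collections around a benchmark run and check how close the heap is to its maximum. The sketch below is only an illustration: the class name `GcPressureCheck` and the stand-in allocation loop are assumptions, not part of the poker library. It relies only on the standard `java.lang.management` API, available since JDK 1.5.

```java
import java.lang.management.GarbageCollectorMXBean;
import java.lang.management.ManagementFactory;

public class GcPressureCheck {
    /** Total number of collections reported so far by all collectors. */
    static long totalGcCount() {
        long count = 0;
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            long c = gc.getCollectionCount(); // -1 when the collector does not report a count
            if (c > 0) {
                count += c;
            }
        }
        return count;
    }

    /** Fraction of the maximum heap currently in use. */
    static double heapUsedFraction() {
        Runtime rt = Runtime.getRuntime();
        long used = rt.totalMemory() - rt.freeMemory();
        return (double) used / rt.maxMemory();
    }

    public static void main(String[] args) {
        long before = totalGcCount();

        // Hypothetical stand-in for the real benchmark: many short-lived allocations.
        long checksum = 0;
        for (int i = 0; i < 200000; i++) {
            int[] hand = new int[7];
            hand[0] = i;
            checksum += hand[0];
        }

        long after = totalGcCount();
        System.out.println("collections during run: " + (after - before));
        System.out.println("heap used fraction: " + heapUsedFraction());
        System.out.println("checksum: " + checksum);
    }
}
```

Running it with something like `java -Xms512m -Xmx512m -verbose:gc GcPressureCheck` pins the heap size and logs each collection. The 512m value is an arbitrary example, not a setting taken from these benchmarks.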

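The collections-to-arrays change described earlier can be illustrated with a small sketch. This is not the library's actual code: the class `RankCounts`, the 0..12 rank encoding, and the method names are all assumptions made for the example. It contrasts counting card ranks with a `HashMap`, which boxes every key and value, against a plain `int[]` indexed by rank.

```java
import java.util.HashMap;
import java.util.Map;

public class RankCounts {
    /** Collection-based version: every rank and count is boxed into an Integer. */
    static Map<Integer, Integer> countWithMap(int[] ranks) {
        Map<Integer, Integer> counts = new HashMap<Integer, Integer>();
        for (int r : ranks) {
            Integer old = counts.get(r);
            counts.put(r, old == null ? 1 : old + 1);
        }
        return counts;
    }

    /** Array-based version: ranks 0..12 (assumed encoding) index the array directly. */
    static int[] countWithArray(int[] ranks) {
        int[] counts = new int[13];
        for (int r : ranks) {
            counts[r]++;
        }
        return counts;
    }

    /** Largest number of cards sharing a single rank. */
    static int maxOfAKind(int[] counts) {
        int best = 0;
        for (int c : counts) {
            if (c > best) {
                best = c;
            }
        }
        return best;
    }

    public static void main(String[] args) {
        int[] hand = {12, 12, 5, 12, 3, 5, 0}; // seven cards, ranks only
        System.out.println("map count for rank 12:   " + countWithMap(hand).get(12));
        System.out.println("array count for rank 12: " + countWithArray(hand)[12]);
        System.out.println("max of a kind:           " + maxOfAKind(countWithArray(hand)));
    }
}
```

Both versions produce the same counts. The array version does no hashing and allocates no wrapper objects per card, which is the kind of overhead a profiler tends to attribute to the collection classes in a hot loop.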